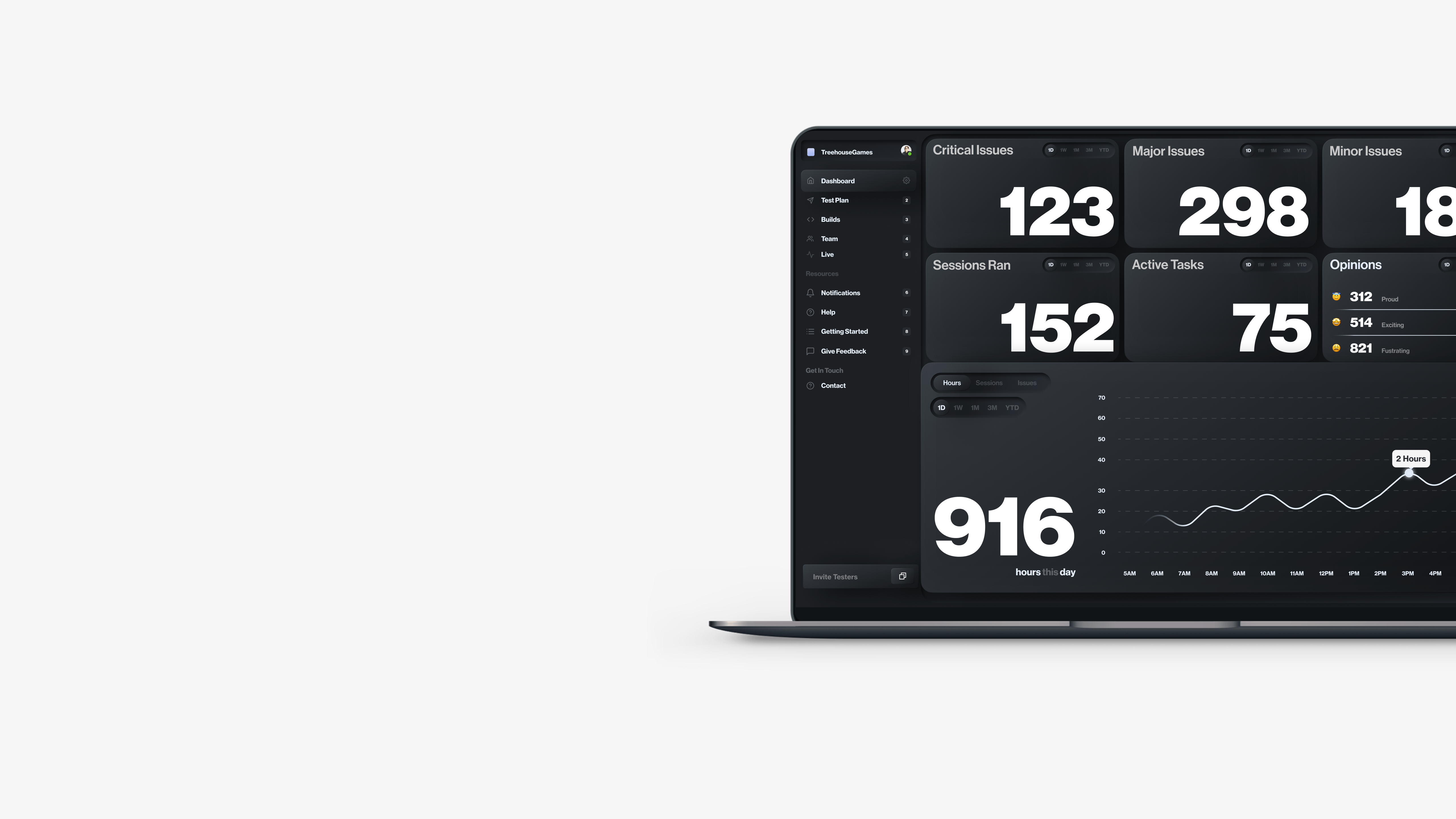

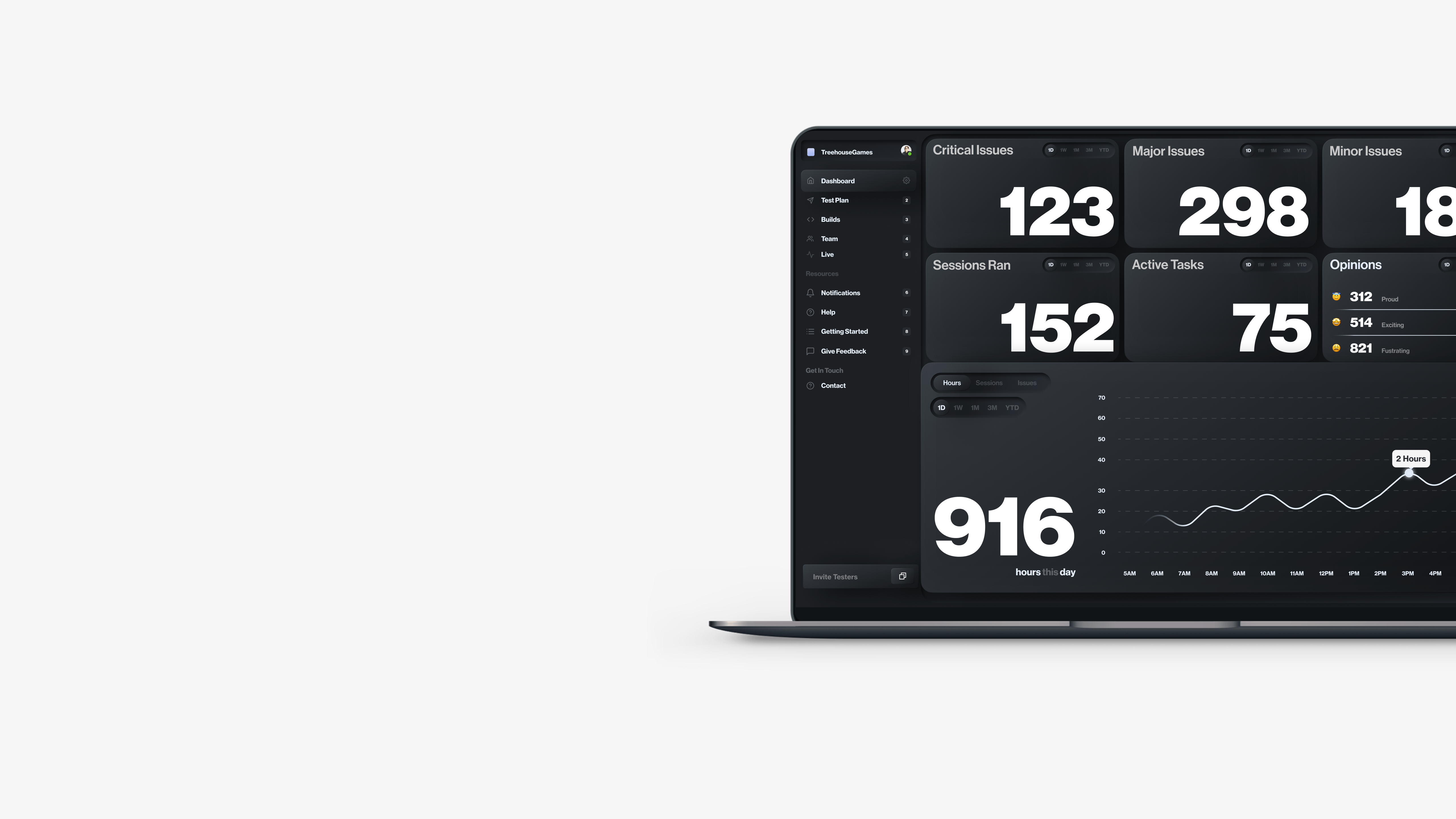

Dashboard

Easy, high-level access to everything that has been happening with the game, to ensure things are going according to plan.

Build Management

Where all builds are managed and maintained. Once a build is uploaded, tasks and testers can be assigned to it, which allows leads to do everything on one screen.

Annotation Review

The screen where the QA lead reviews issues that testers found, along with details of the hardware they were using. If the QA lead decides an issue is a bug, they can create a Jira ticket for development in one click, or simply dismiss the issue.

Team Management

This screen allows the QA admin to review stats about their testers that they simply didn’t have access to before. Game studios would sometimes outsource QA testing and then get hit with a bill for X hours, with only a rough breakdown of where those hours went and no way to verify whether it was accurate.

Test Scheduling

This screen allows QA leads to plan their tasks well in advance, so that when a fresh build comes from development they can upload it here and quickly assign the pre-populated tasks they have scheduled for the day.

Test Plan

This screen is essentially a Google Sheet. I didn’t want to reinvent the wheel here. Almost every QA lead I spoke with kept their test plan in a Google Sheet, so I wanted this to follow a UX pattern they were already used to. These datasets can range from 500 to 3,000 entries, so we needed a powerful filtering system that lets users easily find what they are looking for.

Test Plan - Filters

We allowed our users to create custom filters that they could save for easy access in the future. This let them quickly surface the task they were looking for without scrolling through hundreds or thousands of entries, which would be a nightmare.
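To make the idea of a saved filter a bit more concrete, here is a minimal sketch of how one could be modeled: a named set of column conditions applied to the test plan rows. This is an illustration only, not the product's actual implementation; the `SavedFilter` class, the `apply_filter` helper, and the column names (`area`, `platform`, `status`) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SavedFilter:
    """A named, reusable filter (hypothetical model, not the real schema)."""
    name: str
    # Maps a column name to the set of values accepted for that column.
    conditions: dict

    def matches(self, row: dict) -> bool:
        # A row passes only if every condition accepts the row's value.
        return all(row.get(col) in allowed
                   for col, allowed in self.conditions.items())


def apply_filter(rows: list, saved: SavedFilter) -> list:
    """Return only the test plan rows that satisfy the saved filter."""
    return [row for row in rows if saved.matches(row)]


# Tiny made-up test plan; real plans ran from 500 to 3,000 entries.
test_plan = [
    {"id": "TP-001", "area": "Combat", "platform": "PC", "status": "Open"},
    {"id": "TP-002", "area": "Menus", "platform": "Console", "status": "Open"},
    {"id": "TP-003", "area": "Combat", "platform": "Console", "status": "Done"},
    {"id": "TP-004", "area": "Combat", "platform": "Console", "status": "Open"},
]

open_console_combat = SavedFilter(
    name="Open console combat",
    conditions={"area": {"Combat"}, "platform": {"Console"}, "status": {"Open"}},
)

results = apply_filter(test_plan, open_console_combat)
print([row["id"] for row in results])  # prints ['TP-004']
```

Because the filter is just a name plus its conditions, it can be stored once and re-run against every new version of the test plan, which is what makes it useful for "easy access in the future."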
